How do I get dumps for the Microsoft 70-767 exam? Examsdemo shares the latest Microsoft 70-767 exam questions and answers and online practice tests, and Microsoft exam experts update the 70-767 questions throughout the year. Get the full 70-767 exam dumps selection: https://www.leads4pass.com/70-767.html (389 Q&As). Pass the exam with ease!

Table of Contents:

- Latest Microsoft 70-767 google drive

- Effective Microsoft 70-767 exam practice questions

- Related 70-767 Popular Exam resources

- leads4pass Year-round Discount Code

- What are the advantages of leads4pass?

Latest Microsoft 70-767 google drive

[PDF] Free Microsoft 70-767 pdf dumps download from Google Drive: https://drive.google.com/open?id=1g3QEW1uPcqIlBwMIvz9T-l1iYNIZF7hJ

Exam 70-767: Implementing a Data Warehouse using SQL: https://www.microsoft.com/en-us/learning/exam-70-767.aspx

Skills measured

This exam measures your ability to accomplish the technical tasks listed below.

- Design, implement and maintain a data warehouse (35–40%)

- Extract, transform and load data (40–45%)

- Build data quality solutions (15–20%)

Who should take this exam?

This exam is intended for extract, transform, and load (ETL) and data warehouse developers who create business intelligence (BI) solutions.

Their responsibilities include data cleansing, in addition to ETL and data warehouse implementation.

Latest updates Microsoft 70-767 exam practice questions

QUESTION 1

You are creating a Data Quality Services (DQS) solution. You must provide statistics on the accuracy of the data.

You need to use DQS profiling to obtain the required statistics.

Which DQS activity should you use?

A. Cleansing

B. Knowledge Discovery

C. SQL Profiler

D. Matching Rule Definition

Correct Answer: A

QUESTION 2

You plan to use the dtutil.exe utility with Microsoft SQL Server Integration Services (SSIS) to customize packages. You need to create a new package ID for package1 on Server1. Which dtutil.exe command should you run?

A. dtutil.exe /FILE c:\repository\package1.dtsx /DestServer Server1 /COPY SQL;package1.dtsx

B. dtutil.exe /I /FILE c:\repository\package1.dtsx

C. dtutil.exe /SQL package1 /COPY DTS;c:\repository\package1.dtsx

D. dtutil.exe /SQL package1 /DELETE

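Correct Answer: B

Explanation: The /I (IDRegenerate) option generates a new GUID for the package. Copying a package with /COPY keeps the original package ID.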

QUESTION 3

You are designing a SQL Server Integration Services (SSIS) data flow to load sales transactions from a source system into a data warehouse hosted on Windows Azure SQL Database. One of the columns in the data source is named ProductCode.

Some of the data to be loaded will reference products that need special processing logic in the data flow.

You need to enable separate processing streams for a subset of rows based on the source product code.

Which Data Flow transformation should you use?

A. Multicast

B. Conditional Split

C. Destination Assistant

D. Script Task

Correct Answer: B

Explanation: We use Conditional Split to split the source data into separate processing streams.

A Script Component (the answer to another version of this question) could also be used, but it is not the same as a Script Task.
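The routing idea behind Conditional Split can be sketched in plain Python. This is only an illustration of the logic, not SSIS code: the `conditional_split` helper, the `SPECIAL_CODES` set, and the sample rows are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, object]


def conditional_split(
    rows: List[Row],
    conditions: List[Tuple[str, Callable[[Row], bool]]],
    default_output: str = "default",
) -> Dict[str, List[Row]]:
    """Send each row to the first output whose condition is true.

    Rows that match no condition go to the default output, which mirrors
    how a Conditional Split evaluates its conditions in order.
    """
    outputs: Dict[str, List[Row]] = {name: [] for name, _ in conditions}
    outputs[default_output] = []
    for row in rows:
        for name, predicate in conditions:
            if predicate(row):
                outputs[name].append(row)
                break
        else:
            # No condition matched this row.
            outputs[default_output].append(row)
    return outputs


# Hypothetical product codes that need special processing.
SPECIAL_CODES = {"P-100", "P-200"}

sales = [
    {"ProductCode": "P-100", "Amount": 25.0},
    {"ProductCode": "P-300", "Amount": 10.0},
    {"ProductCode": "P-200", "Amount": 7.5},
]

streams = conditional_split(
    sales,
    [("special", lambda r: r["ProductCode"] in SPECIAL_CODES)],
)
```

Each row lands in exactly one stream, which is the difference from Multicast, where every output receives every row.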

QUESTION 4

You are implementing a Microsoft SQL Server data warehouse with a multi-dimensional data model.

Orders are stored in a table named FactOrder. The addresses that are associated with all orders are stored in a fact table named FactAddress. A key in the FactAddress table specifies the type of address for an order.

You need to ensure that business users can examine the address data by either of the following:

- shipping address and billing address

- shipping address or billing address type

Which data model should you use?

A. star schema

B. snowflake schema

C. conformed dimension

D. slowly changing dimension (SCD)

E. fact table

F. semi-additive measure

G. non-additive measure

H. dimension table reference relationship

Correct Answer: H

QUESTION 5

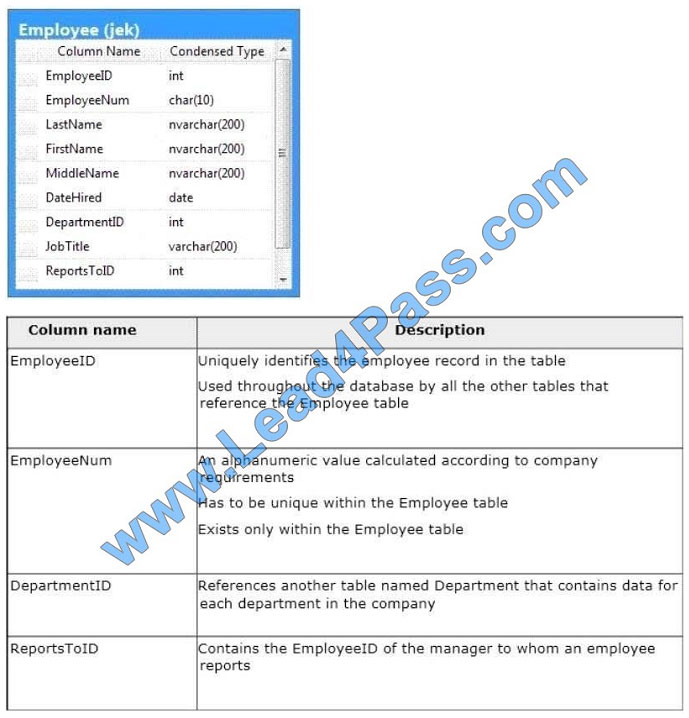

You administer a Microsoft SQL Server 2016 database. The database contains a table named Employee. Part of the Employee table is shown in the exhibit. (Click the Exhibit button.)

Confidential information about the employees is stored in a separate table named EmployeeData. One record exists within EmployeeData for each record in the Employee table. You need to assign the appropriate constraints and table properties to ensure data integrity and visibility. On which column in the Employee table should you use an identity specification to include a seed of 1,000 and an increment of 1?

A. DateHired

B. DepartmentID

C. EmployeeID

D. EmployeeNum

E. FirstName

F. JobTitle

G. LastName

H. MiddleName

I. ReportsToID

Correct Answer: C
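In T-SQL, a seed of 1,000 and an increment of 1 is written as IDENTITY(1000, 1). The behaviour can be sketched in Python as a simple counter. The `IdentityColumn` class and the sample names below are hypothetical and only illustrate how the generated values progress.

```python
from itertools import count


class IdentityColumn:
    """Hands out values starting at `seed` and stepping by `increment`."""

    def __init__(self, seed: int, increment: int) -> None:
        self._values = count(seed, increment)

    def next_value(self) -> int:
        return next(self._values)


# Seed of 1,000 and increment of 1, as in the question.
employee_id = IdentityColumn(seed=1000, increment=1)

# Hypothetical rows: each insert receives the next identity value.
employees = [
    {"EmployeeID": employee_id.next_value(), "LastName": last_name}
    for last_name in ("Adams", "Baker", "Chen")
]
```

The first row receives 1000 and each later row receives the previous value plus 1, which makes such a column a natural fit for a surrogate key like EmployeeID.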

QUESTION 6

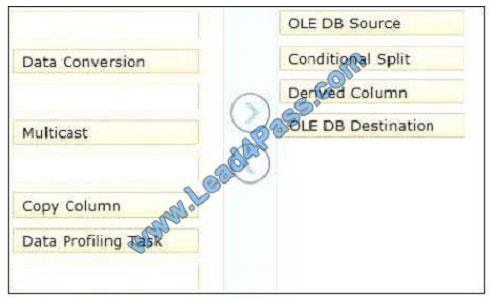

You are creating a SQL Server Integration Services (SSIS) package to populate a fact table from a source table. The fact table and source table are located in a SQL Azure database. The source table has a price field and a tax field. The OLE DB source uses the data access mode of Table.

You have the following requirements:

The fact table must populate a column named TotalCost that computes the sum of the price and tax columns.

Before the sum is calculated, any records that have a price of zero must be discarded.

You need to create the SSIS package in SQL Server Data Tools.

In what sequence should you order four of the listed components for the data flow task? (To answer, move the appropriate components from the list of components to the answer area and arrange them in the correct order.)

Select and Place:

Correct Answer:
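The two stated requirements imply an order: discard the zero-price rows first, then compute the sum. A minimal Python sketch of that order follows. It illustrates the row logic only and is not SSIS code; the sample rows and the helper names `discard_zero_price` and `add_total_cost` are hypothetical.

```python
from decimal import Decimal

# Hypothetical source rows with the price and tax fields from the question.
source_rows = [
    {"price": Decimal("10.00"), "tax": Decimal("0.80")},
    {"price": Decimal("0.00"), "tax": Decimal("0.00")},
    {"price": Decimal("5.50"), "tax": Decimal("0.44")},
]


def discard_zero_price(rows):
    """Step 1: drop any record whose price is zero."""
    return (row for row in rows if row["price"] != 0)


def add_total_cost(rows):
    """Step 2: add a TotalCost column equal to price + tax."""
    return ({**row, "TotalCost": row["price"] + row["tax"]} for row in rows)


# Filter first, then derive the new column, then load.
fact_rows = list(add_total_cost(discard_zero_price(source_rows)))
```

Filtering before computing means the sum is never calculated for rows that will be thrown away.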

QUESTION 7

You are using the Knowledge Discovery feature of the Data Quality Services (DQS) client application to modify an existing knowledge base.

In the mapping configuration, two of the three columns are mapped to existing domains in the knowledge base. The third column, named Group, does not yet have a domain.

You need to complete the mapping of the Group column.

What should you do?

A. Map a composite domain to the source column.

B. Create a composite domain that includes the Group column.

C. Add a domain for the Group column.

D. Add a column mapping for the Group column.

Correct Answer: C

QUESTION 8

You are designing a complex SQL Server Integration Services (SSIS) project that uses the Project Deployment model. The project will contain between 15 and 20 packages. All the packages must connect to the same data source and destination.

You need to define and reuse the connection managers in all the packages by using the least development effort.

What should you do?

A. Copy and paste the connection manager details into each package.

B. Implement project connection managers.

C. Implement package connection managers.

D. Implement parent package variables in all packages.

Correct Answer: B

QUESTION 9

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You plan to deploy a Microsoft SQL server that will host a data warehouse named DB1.

The server will contain four SATA drives configured as a RAID 10 array.

You need to minimize write contention on the transaction log when data is being loaded to the database.

Solution: You replace the SATA disks with SSD disks.

Does this meet the goal?

A. Yes

B. No

Correct Answer: B

A data warehouse is too big to store on an SSD.

Instead you should place the log file on a separate drive.

References:

https://docs.microsoft.com/en-us/sql/relational-databases/policy-based-management/place-data-and-log-files-on-separate-drives?view=sql-server-2017

QUESTION 10

You are designing a SQL Server Integration Services (SSIS) package that uploads a file to a table named Orders in a SQL Azure database.

The company's auditing policies have the following requirements:

Correct Answer: C

Reference: http://msdn.microsoft.com/en-us/library/ms140223.aspx

QUESTION 11

You are developing a SQL Server Integration Services (SSIS) package.

The package sources data from an HTML web page that lists product stock levels.

You need to implement a data flow task that reads the product stock levels from the HTML web page.

Which data flow sources should you use? (Select two.)

A. Raw File source

B. XML source

C. Custom source component

D. Flat File source

E. Script component

Correct Answer: CE

QUESTION 12

Note: This question is part of a series of questions that use the same or similar answer choices. An answer choice may be correct for more than one question in the series. Each question is independent of the other questions in this series. Information and details provided in a question apply only to that question.

You have a database named DB1.

You need to track auditing data for four tables in DB1 by using change data capture.

Which stored procedure should you execute first?

A. catalog.deploy_project

B. catalog.restore_project

C. catalog.stop_operation

D. sys.sp_cdc_add_job

E. sys.sp_cdc_change_job

F. sys.sp_cdc_disable_db

Correct Answer: D

Because the cleanup and capture jobs are created by default, the sys.sp_cdc_add_job stored procedure is necessary only when a job has been explicitly dropped and must be recreated.

Note: sys.sp_cdc_add_job creates a change data capture cleanup or capture job in the current database. A cleanup job is created using the default values when the first table in the database is enabled for change data capture. A capture job is created using the default values when the first table in the database is enabled for change data capture and no transactional publications exist for the database. When a transactional publication exists, the transactional log reader is used to drive the capture mechanism, and a separate capture job is neither required nor allowed.

Note: sys.sp_cdc_change_job

References: https://docs.microsoft.com/en-us/sql/relational-databases/track-changes/track-data-changes-sql-server

QUESTION 13

You are designing a data warehouse hosted on Windows Azure SQL Database. The data warehouse currently includes the dimUser and dimRegion dimension tables and the factSales fact table. The dimUser table contains records for each user permitted to run reports against the warehouse, and the dimRegion table contains information about sales regions. The system is accessed by users from certain regions, as well as by area supervisors and users from the corporate headquarters.

You need to design a table structure to ensure that certain users can see sales data for only certain regions. Some users must be permitted to see sales data from multiple regions.

What should you do?

A. For each region, create a view of the factSales table that includes a WHERE clause for the region.

B. Create a userRegion table that contains primary key columns from the dimUser and dimRegion tables.

C. Add a region column to the dimUser table.

D. Partition the factSales table on the region column.

Correct Answer: B
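A userRegion table works because it models a many-to-many relationship between users and regions. The sketch below uses Python's built-in sqlite3 module as a stand-in for the real database. The table names come from the question, while the column names (`UserKey`, `RegionKey`, and so on), the sample data, and the `visible_sales` helper are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE dimUser (UserKey INTEGER PRIMARY KEY, UserName TEXT);
    CREATE TABLE dimRegion (RegionKey INTEGER PRIMARY KEY, RegionName TEXT);
    CREATE TABLE factSales (
        SaleKey INTEGER PRIMARY KEY,
        RegionKey INTEGER REFERENCES dimRegion (RegionKey),
        Amount REAL
    );
    -- Bridge table: one row per (user, region) pair the user may see.
    CREATE TABLE userRegion (
        UserKey INTEGER REFERENCES dimUser (UserKey),
        RegionKey INTEGER REFERENCES dimRegion (RegionKey),
        PRIMARY KEY (UserKey, RegionKey)
    );
    INSERT INTO dimUser VALUES (1, 'regional_user'), (2, 'supervisor');
    INSERT INTO dimRegion VALUES (10, 'North'), (20, 'South');
    INSERT INTO factSales VALUES (100, 10, 50.0), (101, 20, 75.0);
    INSERT INTO userRegion VALUES (1, 10), (2, 10), (2, 20);
    """
)


def visible_sales(user_key: int) -> list:
    """Return the SaleKey values the given user is allowed to see."""
    rows = conn.execute(
        """
        SELECT f.SaleKey
        FROM factSales AS f
        JOIN userRegion AS ur ON ur.RegionKey = f.RegionKey
        WHERE ur.UserKey = ?
        ORDER BY f.SaleKey
        """,
        (user_key,),
    ).fetchall()
    return [row[0] for row in rows]
```

Calling `visible_sales(1)` returns only the North sale, while `visible_sales(2)` returns both sales. A single region column on dimUser could not express the second case.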

Related 70-767 Popular Exam resources

leads4pass Year-round Discount Code

What are the advantages of leads4pass?

leads4pass employs the most authoritative exam specialists from Microsoft, Cisco, CompTIA, Oracle, EMC, etc. We update exam data throughout the year. Highest pass rate! We have a large user base. We are an industry leader! Choose leads4pass to pass the exam with ease!

Summary:

It’s not easy to pass the Microsoft 70-767 exam, but with accurate learning materials and proper practice, you can crack the exam with excellent results. https://www.leads4pass.com/70-767.html provides you with the most relevant learning materials that you can use to help you prepare.